Resources and insights

Our Blog

Explore insights and practical tips on mastering Databricks Data Intelligence Platform and the full spectrum of today's modern data ecosystem.

Most teams that move to Databricks get the hard part right. They migrate the processing engine, rebuild the transformation logic, and stand up Unity Catalog. Then they leave Azure Data Factory running in the background: connected to everything, owned by nobody, and quietly accumulating cost and complexity. This post addresses that gap.

Explore More Content

Enforcing Enterprise Naming Conventions in Databricks: The Agentic Way

Naming conventions only work if they're enforced — and a Confluence page nobody reads isn't enforcement. This post walks through using Databricks Workspace Skills to make naming rules executable in Genie Code, then scaling that to a catalog-wide audit agent built with Databricks Apps and DABS. The result is automated, repeatable governance that runs without requiring engineers to opt in.

How to (Efficiently) Process Change Data Feed in Databricks Delta

Databricks AUTO CDC isn't just the easiest way to process Change Data Feed; it's also the cheapest. A benchmark across 25 million INSERT, UPDATE, and DELETE operations found that AUTO CDC beat both Structured Streaming and SQL Warehouse on cost in every run, even as the target table grew to 72 million records. Structured Streaming remains the right choice for custom logic; AUTO CDC wins on standard SCD Type 1/2 patterns at scale.

How to Pass Terraform Outputs to Databricks’ DABS

As teams migrate infrastructure definitions into Declarative Automation Bundles, Terraform still owns the Azure layer — Key Vaults, resource groups, networking. This post walks through a clean, CI/CD-ready pattern for passing Terraform outputs directly into bundle variable overrides, eliminating manual config steps and the environment drift that follows them.
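
As a rough illustration of the idea (not necessarily the pattern the post itself uses), the sketch below converts the JSON printed by `terraform output -json` into `BUNDLE_VAR_<name>` environment variables, which the Databricks CLI reads as bundle variable values. The output names `key_vault_name` and `resource_group` are hypothetical, and it assumes each Terraform output has a bundle variable of the same name.

```python
import json


def terraform_outputs_to_bundle_env(outputs_json: str) -> dict[str, str]:
    """Map the text of `terraform output -json` to BUNDLE_VAR_* variables."""
    outputs = json.loads(outputs_json)
    env = {}
    for name, entry in outputs.items():
        value = entry["value"]
        # Environment variables are strings; JSON-encoding non-string
        # values is an assumption made for this sketch.
        env[f"BUNDLE_VAR_{name}"] = (
            value if isinstance(value, str) else json.dumps(value)
        )
    return env


# Hypothetical sample shaped like real `terraform output -json` output.
sample = json.dumps({
    "key_vault_name": {"sensitive": False, "type": "string", "value": "kv-dev-001"},
    "resource_group": {"sensitive": False, "type": "string", "value": "rg-data-dev"},
})

bundle_env = terraform_outputs_to_bundle_env(sample)
for key, value in bundle_env.items():
    print(f"{key}={value}")
```

In a CI/CD job, the resulting mapping could be exported into the environment before running `databricks bundle deploy`, so no one copies values by hand.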

Genie Code Analysis: Two Weeks Later

Databricks Genie Code hit a 77.1% task success rate in production data science workflows — more than double what general-purpose coding agents achieve. But that performance is entirely conditional on the quality of your Unity Catalog metadata. SunnyData's two-week evaluation breaks down what works, what doesn't, and the governance layer you need before you go live.

5 Databricks Patterns That Look Fine Until They Aren't

Five common Databricks coding patterns — including undocumented API calls, manual SparkSession instantiation, and hardcoded Spark configs — that pass code review but fail silently in serverless environments or during platform migrations. For each anti-pattern, this post explains why it breaks and shows the correct native Databricks approach using DABS, the Databricks SDK, and dynamic job parameters.

Databricks Lakewatch: The Future of Agentic SIEM

Databricks Lakewatch replaces the traditional SIEM model with an Open Security Lakehouse — storing 100% of telemetry in open formats at up to 80% lower TCO. AI agents reason across years of unified data to detect and respond at machine speed, closing the visibility gap that legacy SIEMs were structurally forced to create. Early customers include Adobe and Dropbox, with broader availability following Private Preview.

Lakeflow Connect Free Tier: $35/Day Back in Your Budget

Databricks' permanent Lakeflow Connect free tier delivers 100 DBUs per workspace per day — covering up to 100 million records of ingestion at no additional compute cost. For enterprise teams running multiple workspaces, that's over $255,000 in avoided annual costs. This post breaks down the economics, architecture, and what it means for teams still paying a third-party ETL tax.
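
The arithmetic behind these figures can be sketched as follows. The $35 per day and 100 DBUs per day come from the title and summary above; the workspace count of 20 is an assumption chosen only because it is consistent with "over $255,000", and the post may derive its number differently.

```python
DAILY_SAVING_USD = 35    # from the post title
FREE_DBUS_PER_DAY = 100  # from the summary
DAYS_PER_YEAR = 365

# Implied price per DBU if 100 free DBUs are worth $35.
implied_usd_per_dbu = DAILY_SAVING_USD / FREE_DBUS_PER_DAY

# Annual saving for one workspace.
annual_per_workspace = DAILY_SAVING_USD * DAYS_PER_YEAR


def annual_saving(workspaces: int) -> int:
    """Avoided cost per year across a number of workspaces."""
    return workspaces * annual_per_workspace


ASSUMED_WORKSPACES = 20  # assumption, not stated in the summary

print(implied_usd_per_dbu)
print(annual_per_workspace)
print(annual_saving(ASSUMED_WORKSPACES))
```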

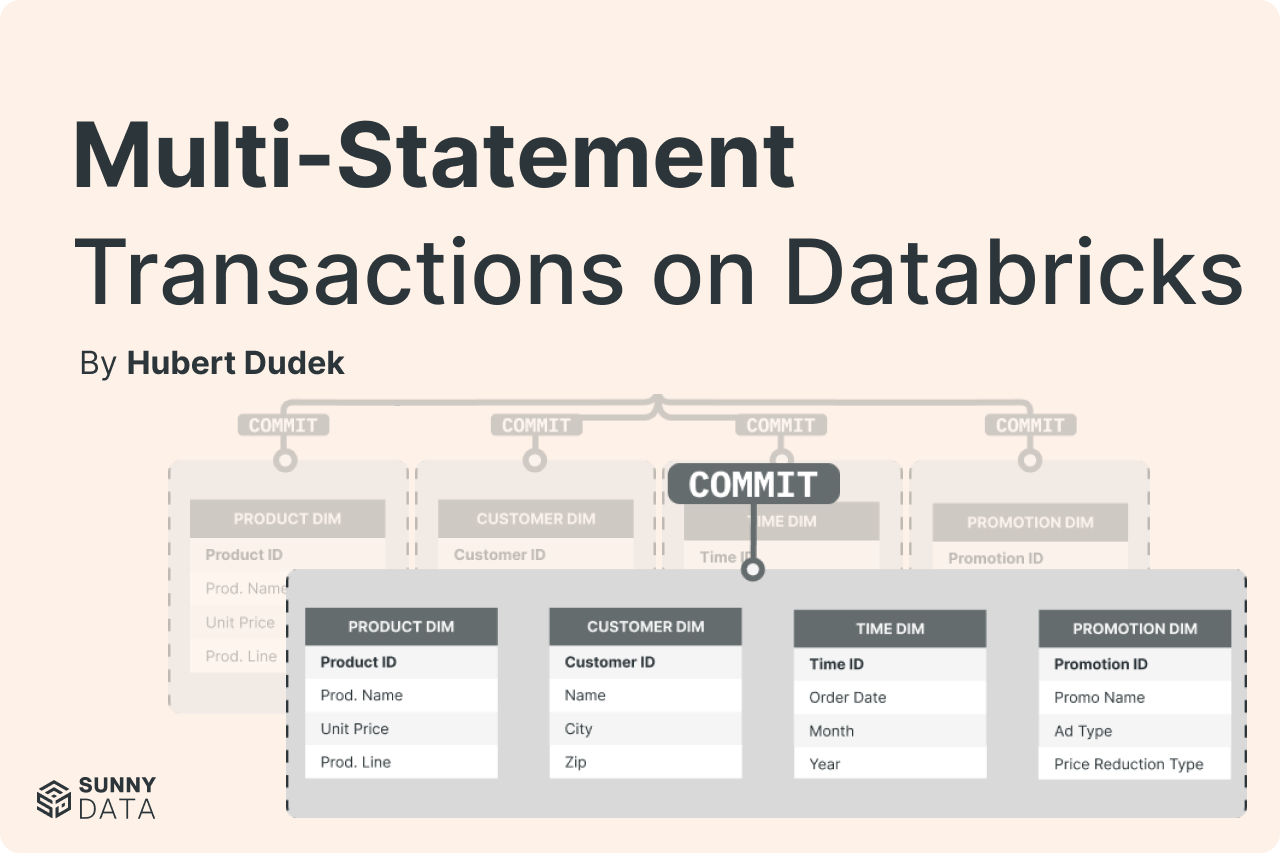

The Lakehouse Finally Has Real Transactions

Learn how Databricks multi-statement transactions use Unity Catalog catalog-managed commits to guarantee atomic updates across multiple Delta tables — with a step-by-step walkthrough.

Deduplicating Data on the Databricks Lakehouse: Making joins, BI, and AI queries “safe by default.”

Learn 5 proven deduplication strategies for Databricks Lakehouse. Prevent duplicate data from breaking AI queries, BI dashboards, and analytics. Includes code examples.
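
For orientation, here is a minimal sketch of one common deduplication approach, keeping only the latest record per business key. It is written in plain Python for illustration and is not claimed to be one of the post's five strategies; the field names `id` and `updated_at` are hypothetical.

```python
def keep_latest(rows: list[dict], key: str, order_by: str) -> list[dict]:
    """Return one row per key value, choosing the row with the largest order_by."""
    latest: dict = {}
    for row in rows:
        k = row[key]
        # Replace the stored row only when this one is strictly newer.
        if k not in latest or row[order_by] > latest[k][order_by]:
            latest[k] = row
    return list(latest.values())


rows = [
    {"id": 1, "updated_at": 1, "status": "new"},
    {"id": 2, "updated_at": 1, "status": "new"},
    {"id": 1, "updated_at": 3, "status": "shipped"},
]

deduped = keep_latest(rows, key="id", order_by="updated_at")
print(deduped)
```

On a lakehouse table the same logic is typically expressed with a window function or a merge rather than a Python loop.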

Deploy Your Databricks Dashboards to Production

Stop deploying Databricks dashboards manually. Learn how to use Git, Asset Bundles, and CI/CD for reliable, reproducible dashboard deployments across environments.

The Nightmare of Initial Load (And How to Tame It)

Initial data loads don't have to be nightmares. Discover the split Bronze table pattern that separates historical backfills from incremental streaming.

Temp Tables Are Here, and They're Going to Change How You Use SQL

Learn how temporary tables in Databricks SQL warehouses enable materialized data, DML operations, and session-scoped ETL workflows. Complete with practical examples.

Hidden Magic Commands in Databricks Notebooks

Discover 12 powerful Databricks notebook magic commands beyond %sql and %python. Learn shortcuts for file operations, performance testing, and debugging.

5 Reasons You Should Be Using LakeFlow Jobs as Your Default Orchestrator

External orchestrators can account for nearly 30% of Databricks job costs. Discover five compelling reasons why LakeFlow Jobs should be your default orchestration layer, from Infrastructure as Code to SQL-driven workflows.

Databricks Workflow Backfill

Use Databricks Workflow backfill jobs to reprocess historical data, recover from outages, and handle late-arriving data efficiently.