Genie Code Analysis: Two Weeks Later

Most data teams aren’t waiting on better AI. They’re waiting on AI they can actually trust with real work: reliable pipelines, accurate reports, sound business logic. The question Genie Code forces you to answer isn't "Is this impressive?" It's "Can I delegate to it?"

After two weeks of intensive testing across enterprise environments since the March 11 launch, we have a clear answer. It's not what the keynote hype suggested, and it's more interesting.

The Paradigm Shift: From "Assistance" to "Delegation"

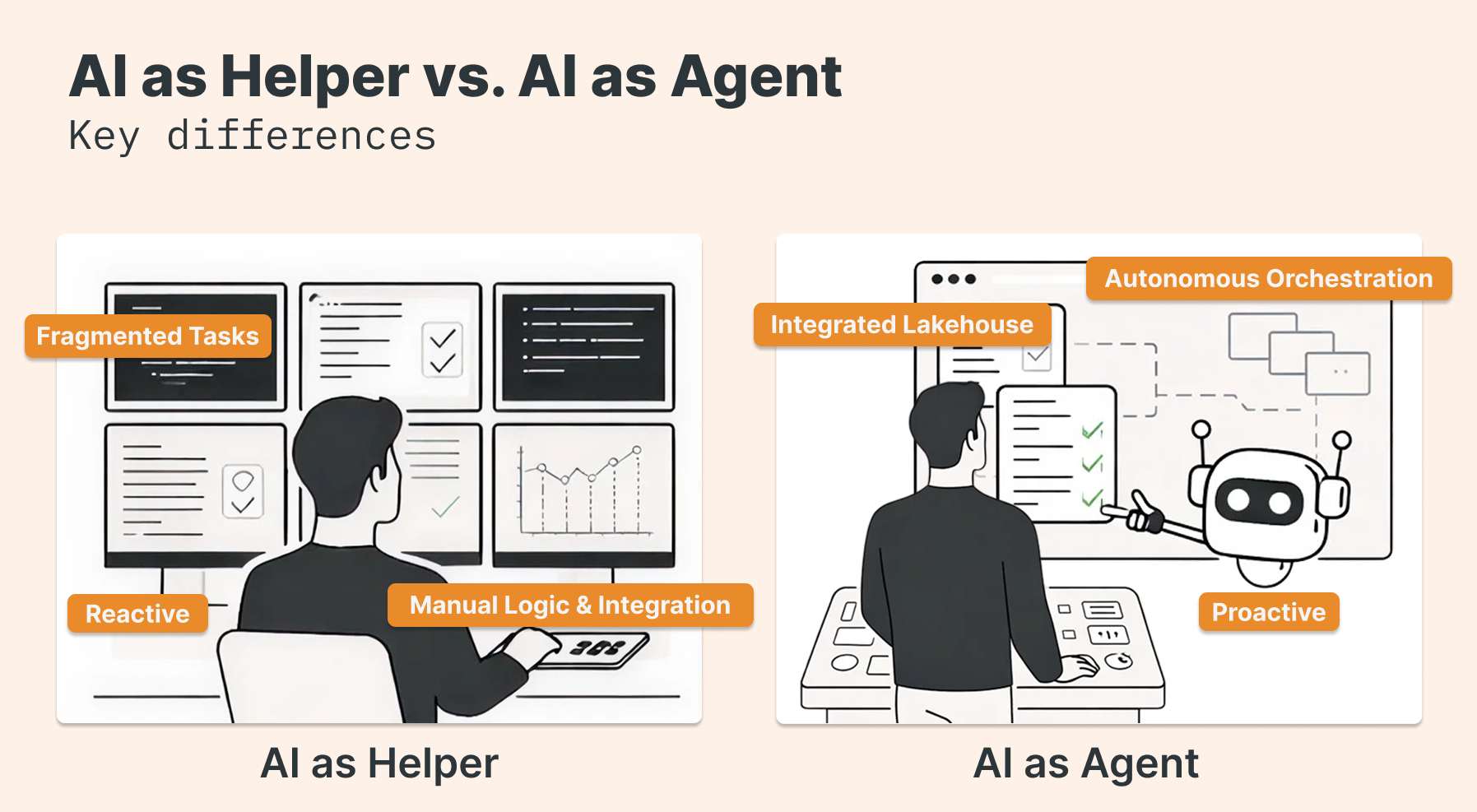

The biggest takeaway after two weeks is that Genie Code is not just a "better autocomplete." It represents a fundamental shift into Agentic Data Work. While traditional assistants are reactive, Genie Code uses multi-step reasoning to plan, execute, and validate entire workflows autonomously within Lakeflow and notebooks.

In real-world data science tasks, this specialized focus enabled Genie Code to achieve a 77.1% success rate, dwarfing the 32.1% seen in general-purpose coding agents. This isn't just about the LLM; it’s about Unity Catalog. Because Genie "lives" inside the catalog, it understands business semantics, lineage, and security policies not as external metadata but as native context.
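To put the two success rates quoted above in proportion, the short calculation below works out the absolute and relative gap. It uses only the 77.1% and 32.1% figures from this article; the variable names are ours.

```python
# Success rates quoted in the article, in percent.
genie_rate = 77.1
general_rate = 32.1

# Absolute gap in percentage points, and how many times higher the rate is.
gap_points = genie_rate - general_rate
ratio = genie_rate / general_rate

print(f"Gap: {gap_points:.1f} percentage points")
print(f"Ratio: {ratio:.2f}x")
```

In other words, the quoted figures put Genie Code about 45 percentage points ahead, or roughly 2.4 times the success rate of the general-purpose agents.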

What It Can Actually Do (And Why It Matters for Your Team)

The features worth your attention aren't the flashiest ones. They're the ones that change what you can safely hand off.

1. Contextual Intelligence & Precision

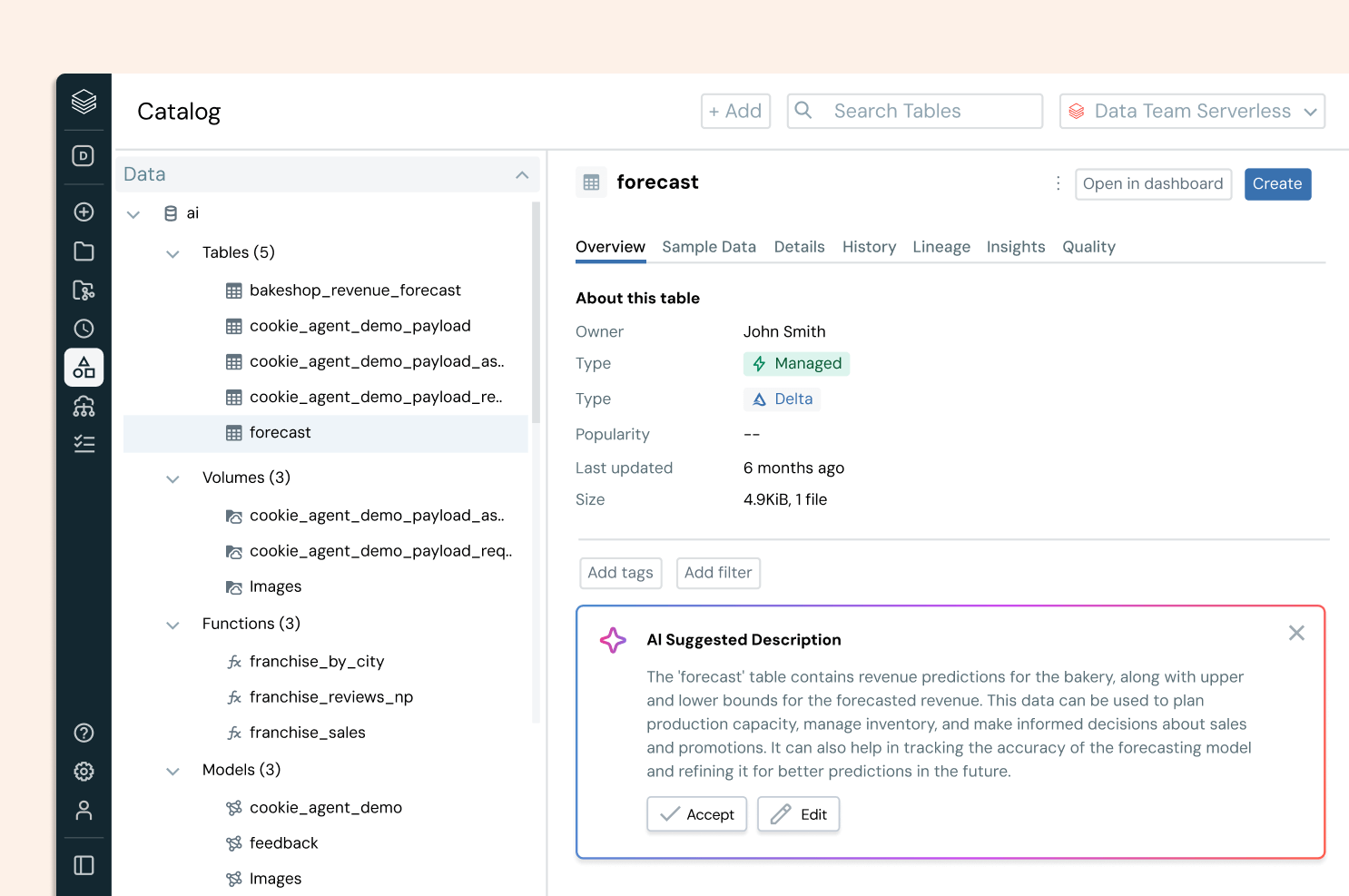

Data Discovery & Assets as Context: Genie doesn't just scan table names; it uses Unity Catalog popularity, lineage, and code samples to surface the most relevant datasets for any analysis. Users can manually specify tables, notebooks, or files as "Assets as Context" to ensure the agent executes tasks with extreme precision.

Custom Instructions: Authors can set persistent, system-level instructions. This ensures that Genie follows company coding conventions, avoids forbidden libraries, or adheres to fiscal calendars (e.g., "Always filter for active campaigns by default").

2. Agentic Execution & Planning

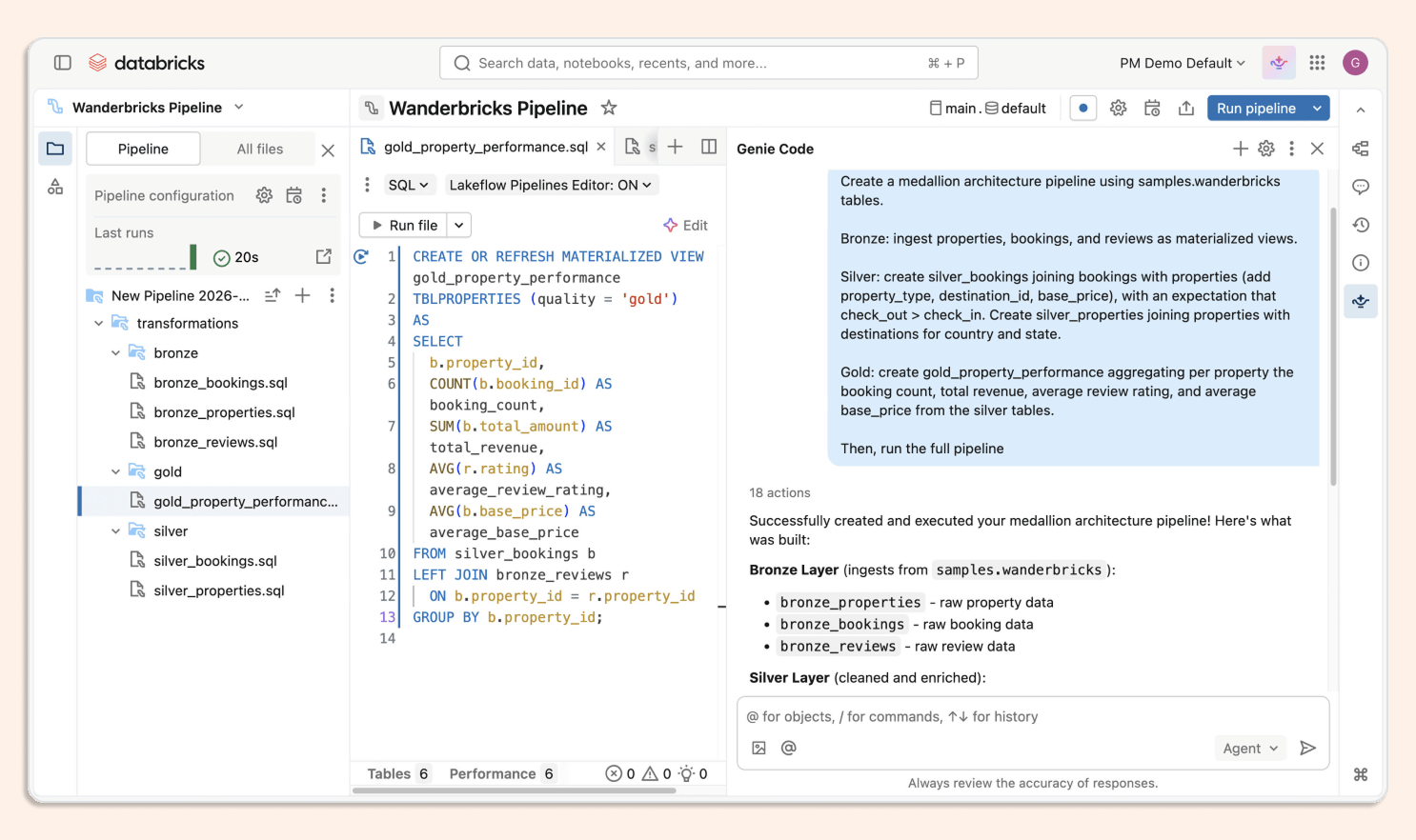

Agent Plan: For complex tasks, Genie doesn't just start coding. It builds a structured plan that the user can review and approve before execution. This provides transparency into its reasoning and keeps the "human-in-the-loop" for critical decisions.

Agentic Coding: This is the heart of the engine. Genie plans, generates, and refines code across multiple notebooks and SQL editors. If a run fails, the agent iterates through the errors, proposing multi-file diffs until the issue is resolved.

Agent Skills: Teams can define domain-specific skills that package best practices (like PII masking or standardized forecasting logic) into reusable workflows that Genie loads on demand.

3. Multimodal & Ecosystem Integration

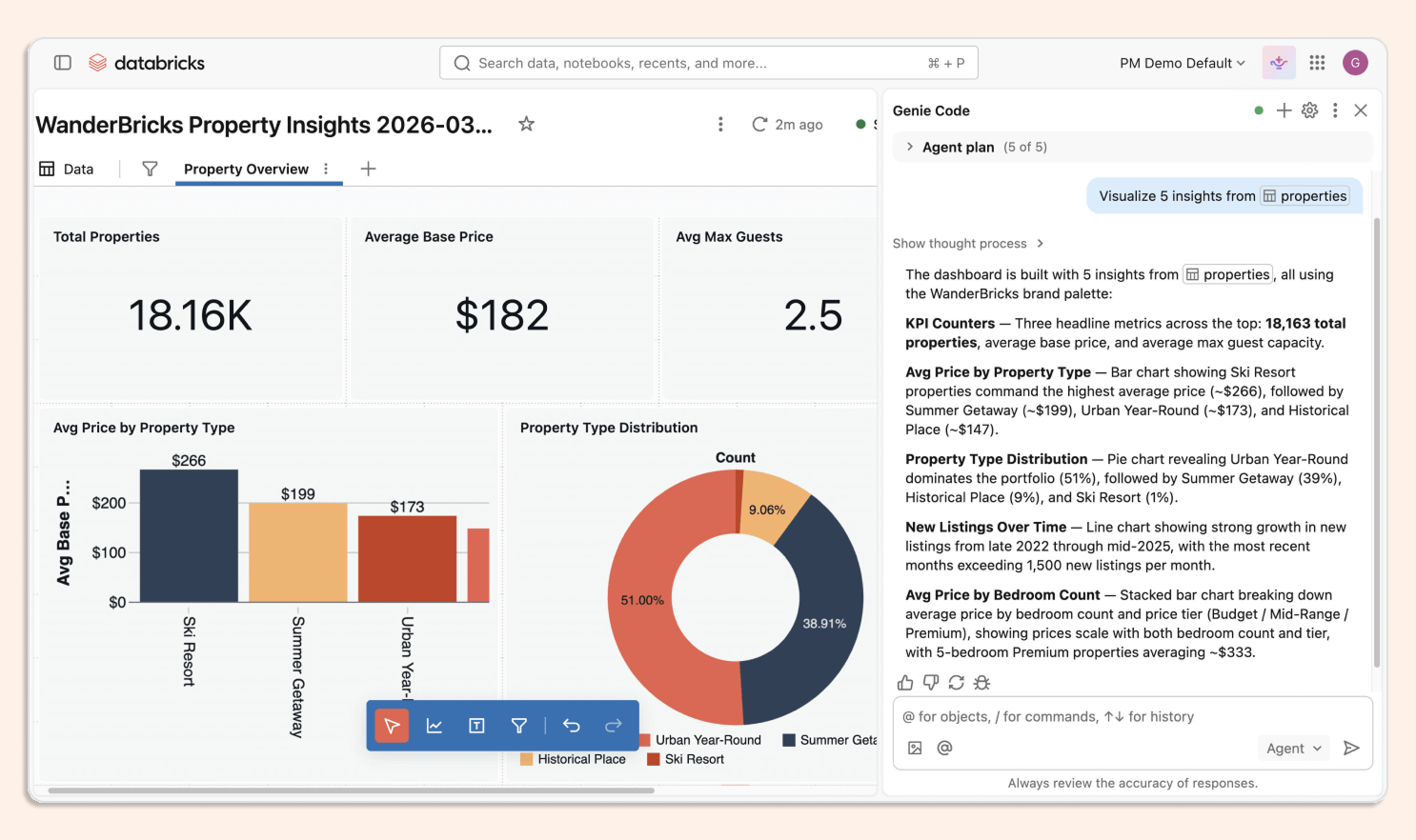

Upload Images: This feature allows users to bridge the gap between "whiteboard and code." You can upload a hand-drawn sketch of a dashboard, and Genie Code will interpret the visual logic to build the actual data model and canvas.

MCP Support (Model Context Protocol): Perhaps the most powerful extensibility feature. Through MCP, Genie securely interacts with third-party tools like Jira, GitHub, Slack, and Confluence. It can gather context from a support ticket or update documentation directly from the Databricks workspace.

The Pros: Where Genie Truly Shines

Beyond the technical features, our two-week evaluation identified three distinct operational advantages that allow Genie Code to outperform general-purpose AI agents in a production environment:

Deep Unity Catalog Integration: By having "insider access," the agent identifies the right joins and table relationships with surgical precision. It doesn't hallucinate column names because it sees the source of truth directly.

Source: Databricks

Autonomous Lifecycle Production: One of the most impressive features discovered in these two weeks is its observability function. Genie Code doesn't just write code; it monitors Lakeflow pipelines and AI models in the background, investigating anomalies before a human intervenes. For example, if a production pipeline fails due to a schema mismatch (e.g., a column changing from INT to STRING), Genie can pinpoint the root cause, validate a fix in a secure sandbox, and propose the final solution.
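To make the schema-mismatch example concrete, here is a minimal sketch of the kind of check involved: comparing an expected schema against what a pipeline actually received. This is not how Genie Code does it internally (we have no visibility into that); the function name, column names, and plain-dict schemas are all hypothetical and chosen only for illustration.

```python
from typing import Dict, List, Tuple


def find_type_drift(
    expected: Dict[str, str], observed: Dict[str, str]
) -> List[Tuple[str, str, str]]:
    """Return (column, expected_type, observed_type) for columns whose type changed."""
    drift = []
    for column, expected_type in expected.items():
        observed_type = observed.get(column)
        # Only report columns present in both schemas with differing types.
        if observed_type is not None and observed_type != expected_type:
            drift.append((column, expected_type, observed_type))
    return drift


# Hypothetical schemas: order_id arrives as STRING instead of INT.
expected_schema = {"order_id": "INT", "amount": "DOUBLE", "customer_id": "INT"}
observed_schema = {"order_id": "STRING", "amount": "DOUBLE", "customer_id": "INT"}

for column, was, now in find_type_drift(expected_schema, observed_schema):
    print(f"{column}: {was} -> {now}")  # prints "order_id: INT -> STRING"
```

A check like this only pinpoints the drifted column; validating and proposing a fix, as described above, is the part the agent is meant to take over.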


The "SME Multiplier" Effect: In most companies, critical business logic resides only in the heads of a few veterans. Genie Code acts as an SME Multiplier, allowing experts to "pour" their knowledge (KPI definitions, SQL logic, business rules) into the system so it becomes a shared organizational asset that anyone can query 24/7.


What Two Weeks in Production Actually Taught Us

Here's where it gets honest. Despite its power, these first two weeks have revealed that Genie Code is not "plug-and-play" magic.

The "Curation Tax" is real. Genie Code's effectiveness is directly proportional to the quality of your Unity Catalog metadata. If your organization has poor naming conventions or empty descriptions in Unity Catalog, the agent will hallucinate. Building a "production-ready" space requires a systematic effort of Iteration (0 to 5): defining SQL expressions for business terms and providing verified example queries. If your catalog is a mess, Genie will reflect that back at you at scale. This isn't a product criticism. It's the same dynamic you'd face hiring a brilliant new engineer who has to work with undocumented legacy systems.
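One way to size the curation effort before you start is to measure how much of your catalog is documented at all. The sketch below assumes you have already exported column metadata into simple records; the `ColumnMeta` class, the helper names, and the sample rows are hypothetical, not a Unity Catalog API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ColumnMeta:
    table: str
    column: str
    description: str  # empty or whitespace-only means nobody wrote one


def missing_descriptions(columns: List[ColumnMeta]) -> List[ColumnMeta]:
    """Columns with no usable description."""
    return [c for c in columns if not c.description.strip()]


def description_coverage(columns: List[ColumnMeta]) -> float:
    """Fraction of columns that have a description (1.0 for an empty list)."""
    if not columns:
        return 1.0
    return (len(columns) - len(missing_descriptions(columns))) / len(columns)


# Hypothetical export of catalog metadata.
catalog = [
    ColumnMeta("sales.orders", "order_id", "Unique order identifier"),
    ColumnMeta("sales.orders", "amt", ""),
    ColumnMeta("sales.orders", "status", "Order lifecycle state"),
    ColumnMeta("sales.customers", "seg_cd", "   "),
]

print(f"Coverage: {description_coverage(catalog):.0%}")
for col in missing_descriptions(catalog):
    print(f"Needs a description: {col.table}.{col.column}")
```

Coverage is a crude proxy: a description can exist and still be useless. It is, however, a cheap first number to bring to the scoping conversation.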

Non-deterministic outputs are a real constraint for regulated use cases. As an LLM-based system, Genie Code can be non-deterministic. Small variations in how a question is phrased (or even the order of messages in a thread) can produce different outputs. For financial reporting or anything with audit requirements, you need Trusted Assets and validated query patterns before you go near production. Plan for that governance layer upfront, not after your first incident.
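A simple way to see this variation for yourself is to ask the same question several ways and count how many distinct queries come back. The sketch below is a test-harness idea under stated assumptions: `fake_ask` is a stand-in we wrote to simulate an assistant, not a Genie Code API, and the normalization is deliberately crude (it lowercases everything, including string literals).

```python
from collections import Counter
from typing import Callable, List


def normalize_sql(sql: str) -> str:
    """Collapse whitespace and case so cosmetic differences do not count as variation."""
    return " ".join(sql.lower().split()).rstrip("; ")


def distinct_outputs(ask: Callable[[str], str], phrasings: List[str]) -> Counter:
    """Count how often each normalized query is produced across the phrasings."""
    return Counter(normalize_sql(ask(p)) for p in phrasings)


def fake_ask(question: str) -> str:
    # Hypothetical stand-in: the answer depends on the wording of the question.
    if "revenue" in question.lower():
        return "SELECT SUM(amount) FROM sales WHERE status = 'booked';"
    return "select sum(amount)\nfrom sales"


phrasings = [
    "What was total revenue?",
    "Total revenue please",
    "How much did we sell?",
]

variants = distinct_outputs(fake_ask, phrasings)
for sql, count in variants.items():
    print(f"{count}x  {sql}")
```

More than one variant for what the business considers a single question is a signal that the question should be pinned to a validated query pattern rather than left to free-form generation.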

The 30-table limit: Currently, a Genie space is limited to 30 tables or views. For extremely complex domains, engineers must still pre-unify tables into metric views, adding a layer of manual preparation. That's not a dealbreaker, but it's a design constraint that belongs in your scoping conversations, not your post-go-live retrospective.

Strategic Context: The QuotientAI Signal


Databricks recently acquired QuotientAI, a specialist in evaluation and reinforcement learning for AI agents. The strategic implication is straightforward: Databricks is embedding continuous self-evaluation directly into the platform. Genie won't just execute; it will monitor its own outputs, flag regressions, and improve based on production traces and user feedback over time. For teams worried about agentic systems drifting in quality, this is the architectural answer. It's worth watching how fast that capability surfaces in the product.

Our Verdict

Genie Code is production-ready, but with conditions.

After two weeks, our conclusion is clear: Genie Code is a high-reward, high-preparation tool. It’s not a magic wand for messy data. As with many things in data, its success depends entirely on a strong Data Quality Pillar within Unity Catalog.

Final Recommendation: Start by cleaning your Unity Catalog metadata today. Agentic engineering is not magic; it is the result of high-quality metadata combined with a powerful reasoning engine. Those who ignore their data foundations will find Genie to be a costly toy; those who invest in them will find a competitive advantage that defines the next decade of their business.