Resources and insights

Our Blog

Explore insights and practical tips on mastering the Databricks Data Intelligence Platform and the full spectrum of the modern data ecosystem.

Most teams that move to Databricks get the hard part right. They migrate the processing engine, rebuild the transformation logic, and stand up Unity Catalog. Then they leave Azure Data Factory running in the background: connected to everything, owned by nobody, and quietly accumulating cost and complexity. This post addresses that gap.

Explore More Content

ML & AI

Enforcing Enterprise Naming Conventions in Databricks: The Agentic Way

Naming conventions only work if they're enforced — and a Confluence page nobody reads isn't enforcement. This post walks through using Databricks Workspace Skills to make naming rules executable in Genie Code, then scaling that to a catalog-wide audit agent built with Databricks Apps and DABS. The result is automated, repeatable governance that runs without requiring engineers to opt in.

How to (Efficiently) Process Change Data Feed in Databricks Delta

Databricks AUTO CDC isn't just the easiest way to process Change Data Feed; it's also the cheapest. A benchmark across 25 million INSERT, UPDATE, and DELETE operations found AUTO CDC outperformed Structured Streaming and SQL Warehouse on cost in every run, even as the target table grew to 72 million records. Structured Streaming remains the right choice for custom logic; AUTO CDC wins on standard SCD Type 1/2 patterns at scale.

How to Pass Terraform Outputs to Databricks’ DABS

As teams migrate infrastructure definitions into Declarative Automation Bundles, Terraform still owns the Azure layer — Key Vaults, resource groups, networking. This post walks through a clean, CI/CD-ready pattern for passing Terraform outputs directly into bundle variable overrides, eliminating manual config steps and the environment drift that follows them.

5 Databricks Patterns That Look Fine Until They Aren't

Five common Databricks coding patterns — including undocumented API calls, manual SparkSession instantiation, and hardcoded Spark configs — that pass code review but fail silently in serverless environments or during platform migrations. For each anti-pattern, this post explains why it breaks and shows the correct native Databricks approach using DABS, the Databricks SDK, and dynamic job parameters.

Hidden Magic Commands in Databricks Notebooks

Discover 12 powerful Databricks notebook magic commands beyond %sql and %python. Learn shortcuts for file operations, performance testing, and debugging.

Databricks Workflow Backfill

Use Databricks Workflow backfill jobs to reprocess historical data, recover from outages, and handle late-arriving data efficiently.

DABs: Referencing Your Resources

Databricks bundle lookups failing with "does not exist" errors? Resource references solve timing issues and create strong dependencies. Complete guide with examples.

SQL: Why Materialized Views Are Your Simplest Data Transformation Tool

Create cost-effective, incremental materialized views in Databricks SQL Warehouse. Includes monitoring tips, best practices, and Enzyme optimization.

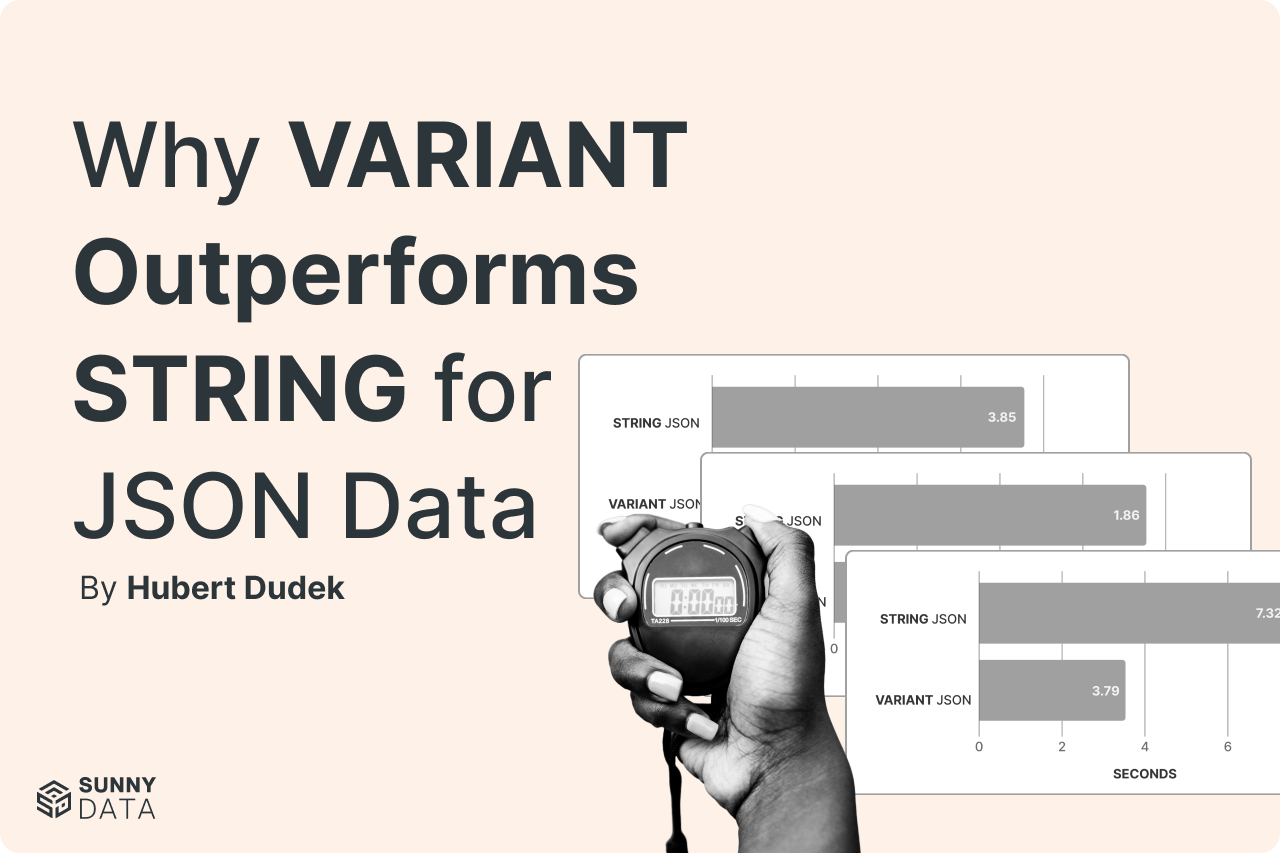

Why VARIANT Outperforms STRING for JSON Data

Check out how Databricks VARIANT outperforms STRING for JSON data with 22% better storage and up to 50% faster queries.

New Databricks INSERT Features: INSERT REPLACE ON and INSERT REPLACE USING

Databricks SQL introduces two powerful new INSERT commands: INSERT REPLACE ON for conditional record replacement and INSERT REPLACE USING for complete partition overwrites. These Delta-native features eliminate complex workarounds while maintaining data integrity. Available in Databricks Runtime 16.3+ and 17.1+ respectively, these commands provide developers with precise control over data updates and partition management in modern data engineering workflows.

Make Joins on Geographical Data: Spatial Support in Databricks

Databricks Runtime 17.1's new geospatial support makes it easy to join geographical data using geography and geometry datatypes. This blog shows you how to map delivery zones, calculate distances, and optimize routes using spatial functions.

CI/CD Best Practices: Passing tests isn't enough

CI/CD pipelines can pass all jobs yet still deploy broken functionality. This blog covers smoke testing, regression testing, and critical validation strategies, which are especially useful for data projects where data quality is as important as code quality.

Recursive CTE: The beauty of SQL Self-Referencing Queries

Recursive CTEs are SQL queries that reference themselves to solve complex problems iteratively. From generating sequences to traversing network graphs and hierarchical data, learn how to eliminate manual looping with SQL solutions.

Data Intelligence for All: 9 Ways Databricks Just Redefined the Future of Data

Discover how Databricks' 9 major announcements at Summit 2025 are democratizing AI with Agent Bricks, Lakebase, free edition, and more game-changing innovations.

Databricks: An Insider’s Perspective with Franco Patano

Josue Brogan interviews Databricks Product Owner Franco Patano about Databricks' current state and future.