Resources and insights

Our Blog

Explore insights and practical tips on mastering the Databricks Data Intelligence Platform and the full spectrum of today's modern data ecosystem.

Most teams that move to Databricks get the hard part right. They migrate the processing engine, rebuild the transformation logic, and stand up Unity Catalog. Then they leave Azure Data Factory running in the background: connected to everything, owned by nobody, and quietly accumulating cost and complexity. This post addresses that gap.

Explore More Content

ML & AI

From Informatica to Databricks: What Actually Works in Production

Informatica's new LTS pricing means staying put is no longer free — it's a recurring tax on a platform with a shrinking capability roadmap. This guide covers the full migration path from PowerCenter to the Databricks Lakeflow stack: the CFO-ready business case, a construct-by-construct translation guide, and the five-phase M5 methodology SunnyData runs on every engagement. If your team is weighing IICS versus a full re-platform, the architecture decision and cost model are here.

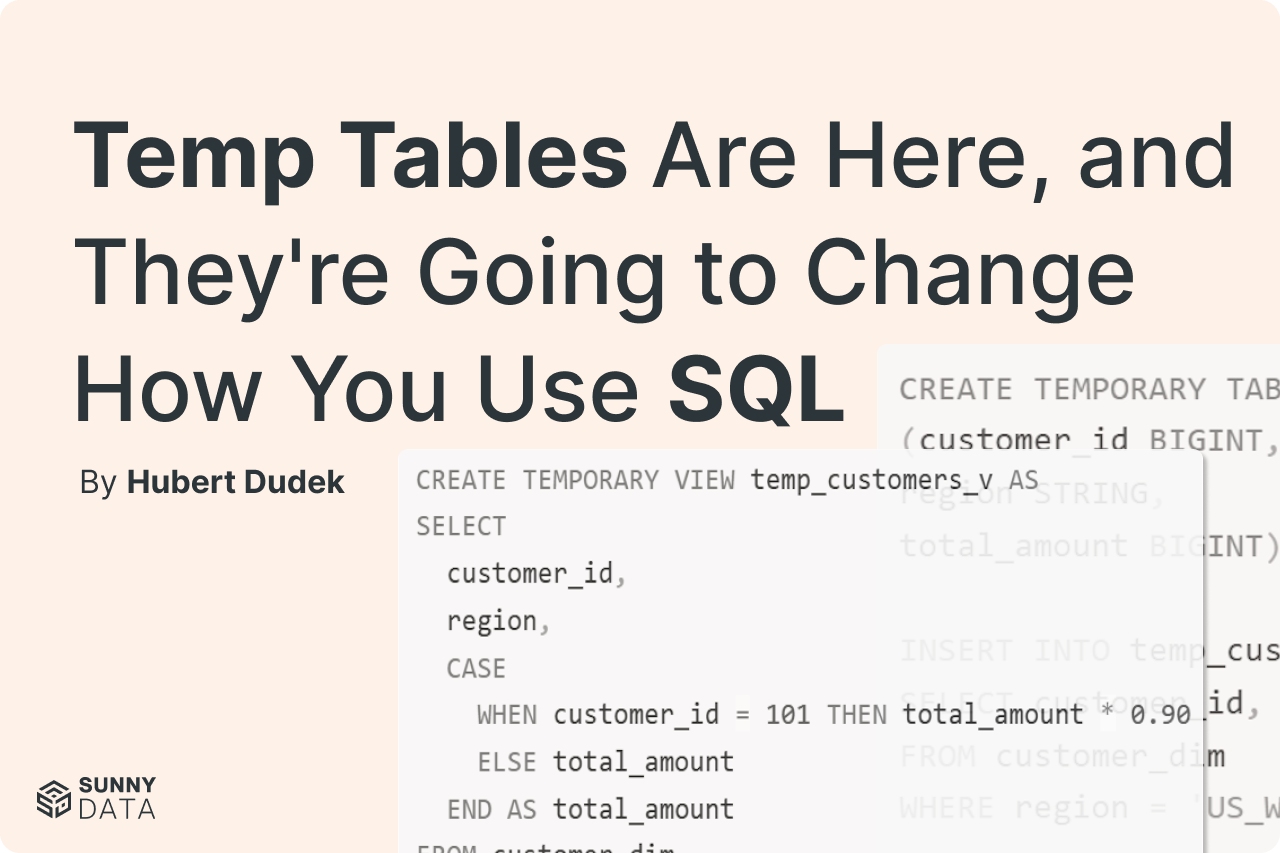

Temp Tables Are Here, and They're Going to Change How You Use SQL

Learn how temporary tables in Databricks SQL warehouses enable materialized data, DML operations, and session-scoped ETL workflows, with practical examples throughout.

Databricks Workflow Backfill

Use Databricks Workflow backfill jobs to reprocess historical data, recover from outages, and handle late-arriving data efficiently.