7 Reasons to Move Out of ADF Now

The case isn't just about cost. It's about direction and the direction ADF is heading isn't where your data platform needs to go.

Azure Data Factory's last public update was August 2024. In the AI era, that's not a minor footnote, it's a signal. Your orchestration layer should be moving at least as fast as your needs/ambitions, and right now, ADF isn't.

If Databricks is already your main platform, keeping ADF alive means maintaining a second platform, splitting your logic, doubling your operational overhead, and betting on a tool that isn't evolving. Here are seven reasons to stop doing that.

REASON 1

The platform has stopped moving

August 2024 was the last meaningful update to ADF's public roadmap. In a time where AI capabilities, governance requirements, and data engineering practices are changing monthly, a stagnant orchestration layer becomes a ceiling, not just a tool. Platform velocity is a product feature. When your vendor slows down, your team eventually does too.

REASON 2

You're paying for an orchestrator you don't need to own

Lakeflow Jobs has a fundamentally different cost model: you pay only for the compute time of the resources you use. No reserved capacity. No separate orchestrator charge on top of everything else.

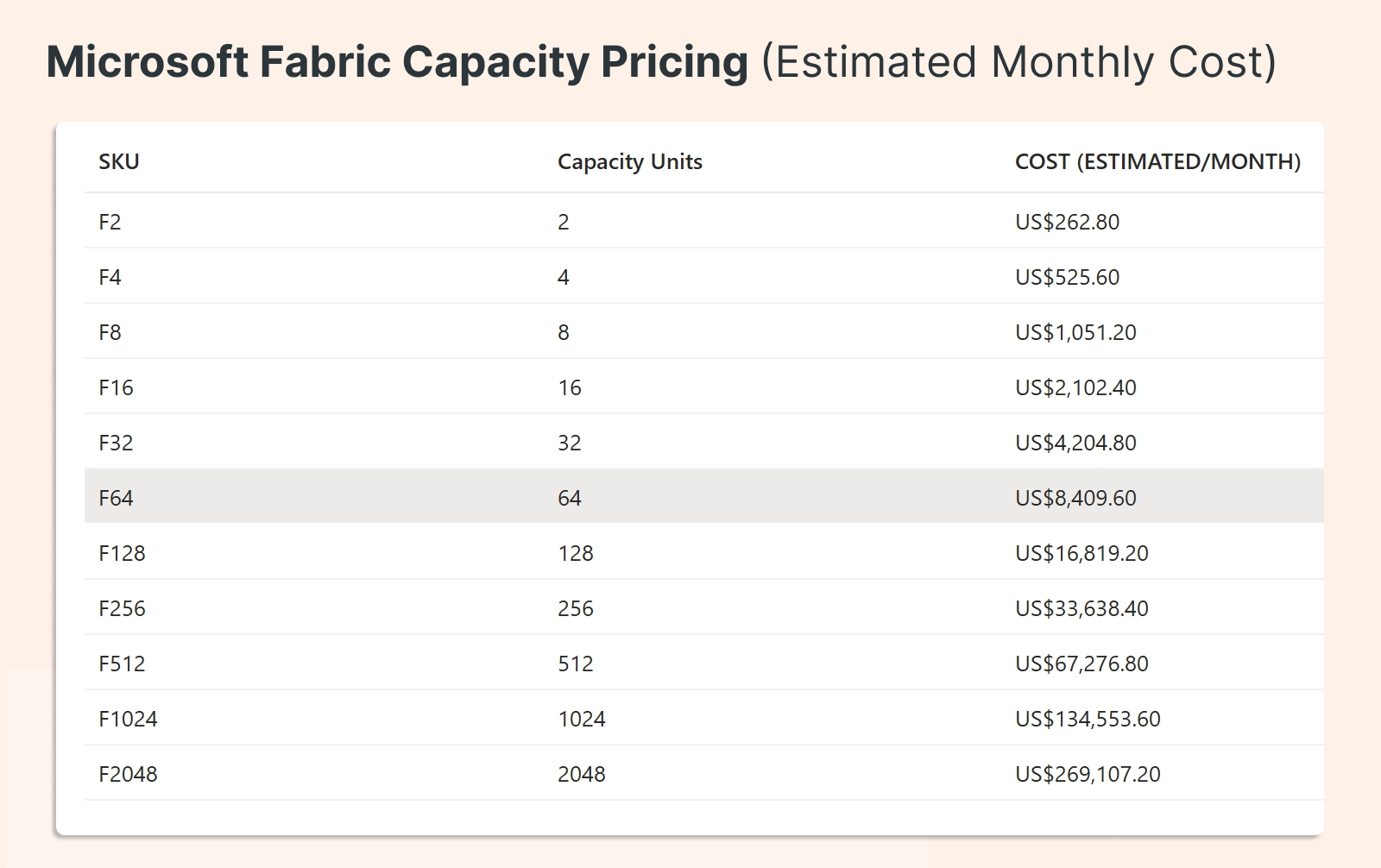

ADF, by contrast, layers cost on top of cost. And if you're considering Fabric ADF as an escape route, be aware: it moves to capacity-based pricing, where you pay for capacity hours, including idle time. That's the opposite of pay-for-work, and it's not obviously better than where you started.

REASON 3

Split platforms create hidden costs

When orchestration lives in ADF, but processing lives in Databricks, the overhead is cognitive as well as financial. Your team context-switches between tools. Incidents span two platforms. Governance and monitoring become duplicated efforts. Fewer tools to manage means faster delivery, fewer handoffs, and fewer things that can go wrong. In the AI era, the higher cost is staying on a platform that doesn't evolve.

REASON 4

Lakeflow Jobs keeps orchestration where the work already happens

One DAG. Built-in triggers, backfills, and monitoring — all native to the platform where your data engineering, AI workloads, and governance already live. When Databricks is your main platform, native orchestration is the logical completion of an architecture that's already mostly there.

REASON 5

Auto Loader is one of the best parts of the migration story

For file-based ingestion (one of ADF's most common use cases), Auto Loader is mature, simple, and purpose-built. Incremental file ingestion rarely needs to be more complex than this, and migrating these pipelines is often the easiest win in the entire transition. If you've been hesitant about the migration effort, Auto Loader is a good place to see just how straightforward it can be.

REASON 6

Most of ADF can be replaced natively

The replacement surface is broader than most teams realize:

Task and job orchestration → Lakeflow Jobs

File, blob, volume, and FTP ingestion → native landing patterns

Incremental file ingestion → Auto Loader

Processing → native compute, SQL, and pipeline logic

Connectors → review case by case; keep only what you truly need

The connectors are usually the last thing to resolve (and the list is almost always shorter than expected once teams look honestly at what they're actually using).

REASON 7

On-prem isn't the blocker you think it is

Many teams have stayed on ADF specifically because of the self-hosted integration runtime. When cloud pull isn't allowed and data must be pushed from on-prem, that constraint feels like it requires ADF. It doesn't.

A push-based pattern solves this cleanly. Databricks Zerobus is a lightweight script you run on-prem that pushes data to the cloud (no ADF required). For a lot of teams, this is the last blocker, and it turns out to be the easiest one to remove.

Quick migration matrix

Where you land depends on your current setup:

Move now if: ADF is mostly orchestration, most data lands as files, and Databricks is already your main platform. This is a straightforward migration.

Move in phases if: You still rely on a few special connectors, or some logic still sits in legacy patterns. Start now, but don't force everything at once.

Replace first, then moveif: Your only blocker is the on-prem runner. Swap it for Zerobus push ingestion, then remove ADF cleanly.

The bottom line

ADF is no longer the safe default. It made sense when it was the center of your data platform. It makes less sense when Databricks already is.

The migration isn't as heavy as it looks, the replacement patterns are mature, and the on-prem blocker is solvable. The question isn't really whether to move but how to sequence it so you move fast without breaking anything.