How an InsurTech Company Achieved Sub-Hour Processing Times from 20 Hour Data Loads

SunnyData partnered with a growing insurtech company serving the Property & Casualty and Workers' Compensation markets to migrate its data workloads to Databricks, implementing a modern Lakehouse architecture with standardized workflows. The result: processing times reduced from hours to minutes, 60% reduction in data infrastructure costs, and a scalable platform that accelerated customer onboarding.

Client name withheld to protect confidentiality

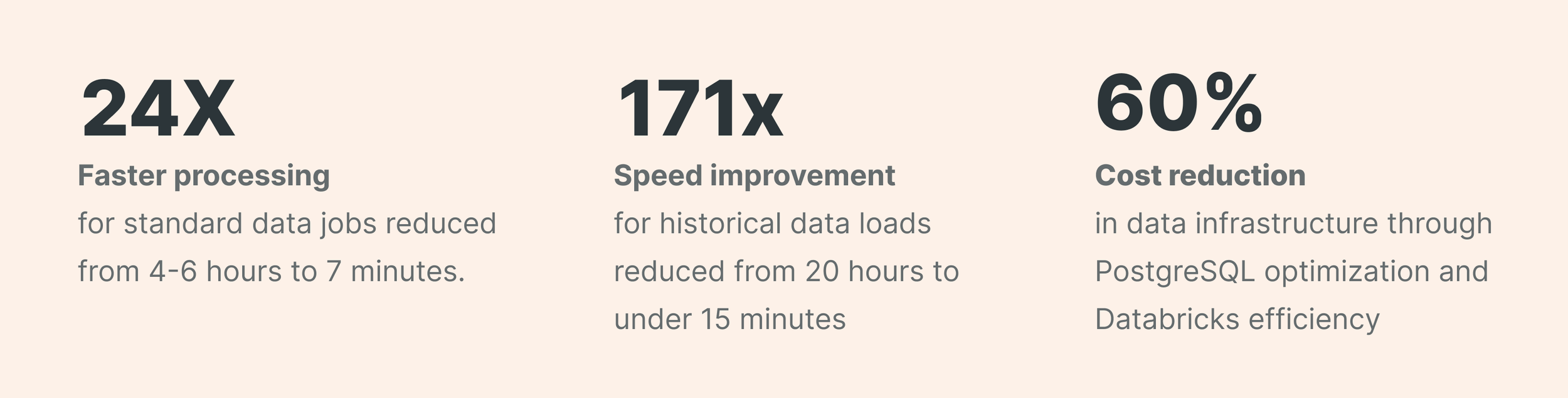

Key Metrics

Client challenges

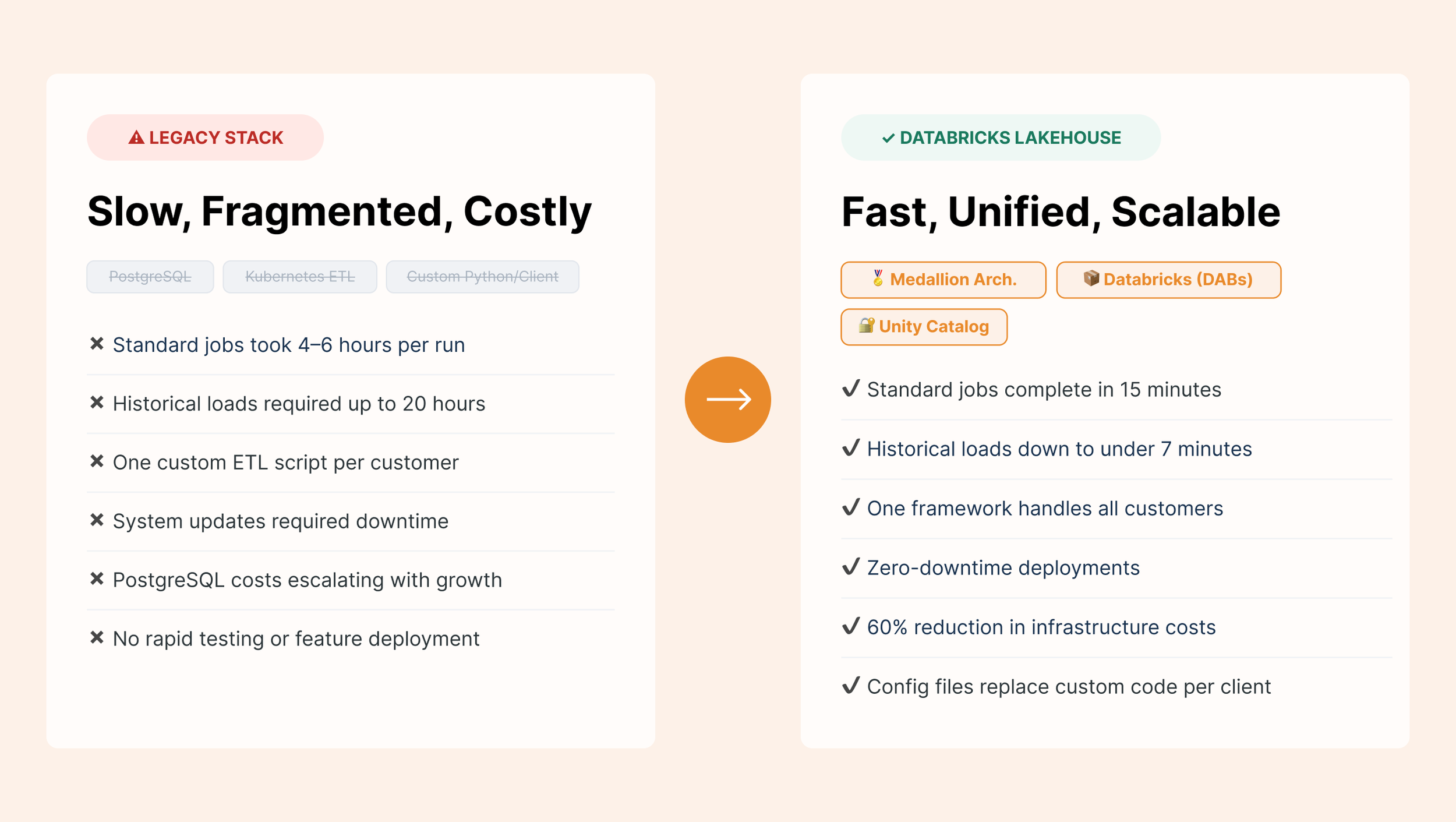

The company had built its initial platform on PostgreSQL, Kubernetes, and Airflow, a solid foundation that served them well in the early stages. However, as their customer base grew and data volumes increased, several critical issues emerged:

Performance Bottlenecks: Standard file processing took 4-6 hours per job, while historical data loads required up to 20 hours to complete. Furthermore, large customer files could even take an entire day to process.

Operational Inefficiencies: To ingest new data, each customer required custom Python ETL scripts. So, for example, 16 clients meant managing 16 separate workflows. There was no standardized process for onboarding new customers, and system updates required downtime, preventing timely file processing.

Cost and Scalability Concerns: PostgreSQL costs were escalating with data growth, while the ability to handle increasing data volumes was becoming increasingly limited. Late data processing created downstream delays for customers as well as the inability to quickly test and deploy new features.

The Solution

SunnyData designed and implemented a Databricks-based solution that maintained the existing Airflow orchestration layer while modernizing data processing capabilities.

The foundation of the new architecture centered on a unified Databricks lakehouse that replaced the fragmented PostgreSQL databases. This platform implemented a medallion architecture with Bronze, Silver, and Gold data layers, while Unity Catalog established comprehensive data governance and security controls.

The team replaced separate customer-specific workflows with a single, intelligent processing framework using Databricks Asset Bundles (DABs). SunnyData built dynamic preprocessing capabilities that automatically detect and handle different customer file formats, using configuration files rather than custom code to accommodate client-specific requirements, reducing the complexity of onboarding new customers.

The system includes schema evolution handling, allowing the client to adapt to changing data requirements without major code rewrites. Integration with Databricks FeatureStore created a bridge to their existing Kubernetes ML models, ensuring continuity in their AI-driven insurance analytics while opening the door for future ML platform consolidation.

Key Benefits Achieved

Processing times dropped from 4-6 hours to 15 minutes for standard jobs and from 20 hours to under 7 minutes for historical data loads. High-volume clients saw processing time reduced from 24 hours to 20 minutes, enabling same-day customer responses even when data arrives late.

By consolidating workflows into a single standardized process, we eliminated maintenance overhead and simplified new customer onboarding. Zero-downtime deployments and 7-minute validation cycles now enable rapid testing and feature deployment.

A 60% reduction in data infrastructure costs was achieved through PostgreSQL optimization and Databricks efficiency gains, delivering better performance at substantially lower cost.

SunnyData’s migration approach delivered immediate performance improvements while establishing a scalable foundation for continued growth. By preserving existing investments while modernizing core data processing capabilities, the solution transformed operational efficiency and positioned the business to innovate faster and serve customers more effectively.